How to decide your PostgreSQL backup strategy?

Do you know what type of your PostgreSQL backup strategy exist and how you could chose the right one for your Mission critical Production database?

Backing up your database is one of the essential requirements to safeguard yourself and to recover the database from catastrophic situations like server-crash, database-crash, or corruption. It doesn't matter whether you are running your database on docker ,VM or cloud Backing up a database is important. Having said, deciding backup and recovery strategy for organization or individual can be a pain point. It certainly requires an understanding of your application, business, and cost. Let's learn where to start and how to choose a PostgreSQL backup strategy.

Let's dive in, Here is a situation:

I have an eCommerce application that has PostgreSQL as a backend. The database size is not large it's around 100GB. Most of our users are active till late evening at 7PM and it's mostly not being accessed 24 hrs. We take a daily full logical backup every night at 12 AM, it takes around 2 hrs of time which is reasonable and then we run some daily database operations.

Monday morning at 10AM, we had a system crash, data disk is gone. The only option we had was to recreate the database from scratch and restore it from backup using the logical backup. It took around 3 hrs to restore the database. We did some housekeeping, basic functional testing and application was up for the users at 2 PM.

Let me emphasize on 2 points:

The database was restored from previous night backup. 10 hrs of data loss. --> Does it matters how much data you lose or till what point you were able to recover?

4 hrs of application downtime. --> How fast you can recover your database and bring the application online?

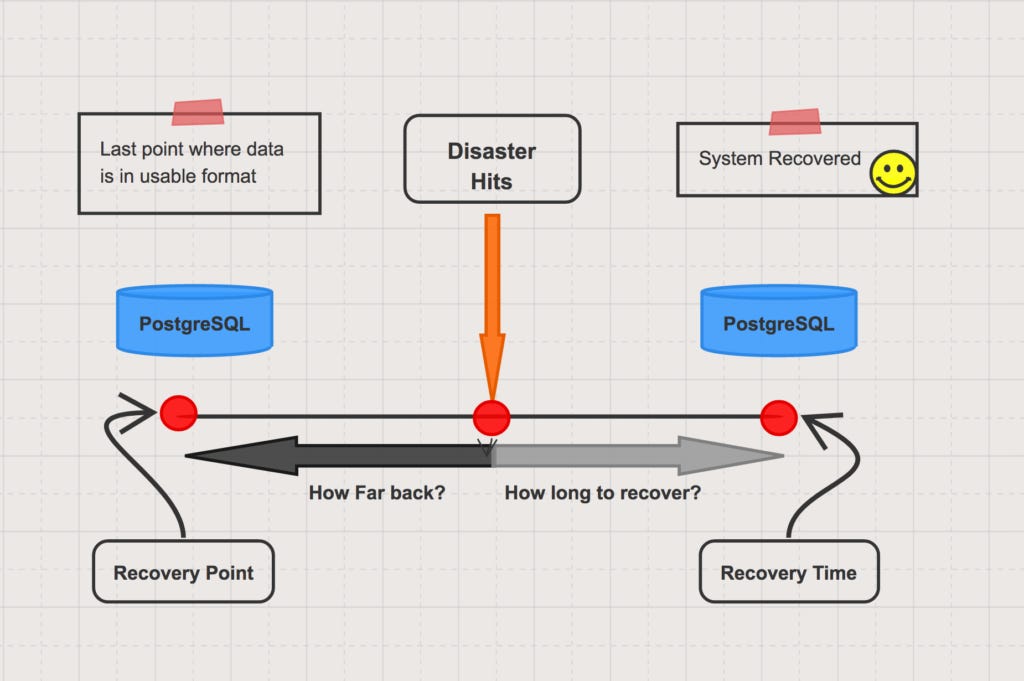

What is Recovery Point Objective(RPO) & Recovery Time Objective(RTO) ?

Recovery Point Objective is a measurement of the maximum tolerable amount of data to lose. It also helps to measure how much time can occur between your last data backup and a disaster without causing serious damage to your business. RPO is useful for determining how often to perform PostgreSQL backup.

RPO is significant because in most cases, you will likely lose some data when a disaster occurs. Even data that is backed up in real-time has a risk of some data loss. Most businesses back up data at fixed intervals of time -- once every hour, once every day or perhaps as infrequently as once every week. The RPO measures how much data you can afford to lose as the result of a disaster.

For example, imagine that you back up your data once every day at midnight and a disaster occurs at eight in the morning. In that case, you would lose eight hours’ worth of data. If your RPO is twenty-four hours or longer, you’re in good shape. But if your RPO is, say, four hours, you're not.

Recovery Time Objective(RTO):

Recovery Time Objective is a metric that helps to calculate how quickly you need to recover your Application(App + Database) and services following a disaster in order to maintain business continuity.

RTO is measured in terms of how long your business can survive following a disaster before operations are restored to normal. If your RTO is twenty-four hours, it means you’ve determined that the business can maintain operations for that amount of time without having its normal data and infrastructure available. If data and infrastructure are not recovered within twenty-four hours, the business could suffer irreparable harm.

Depending upon what is your objective(In terms of RPO and RTO) you have to decide your backup strategy. One of the key thing to remember is that your PostgreSQL backup strategy include:

Method to take a backup(Online, offline, logical)

Frequency of the PostgreSQL backup (Weekly, Daily hourly)

In the scenario which I have mentioned if we wanted to recover the database with minimum data loss we could have used below strategy

Enabling Archiving(Storing WAL to a safe location)

Online full PostgreSQL backup (PITR) with incremental/archive backup.

Weekly full backup

Daily incremental-Midnight

We could have used Point In time recovery by restoring the database from a full backup and then applying incremental(Archived WALs) to the latest point.

Most common PostgreSQL backup Strategy(based upon environment)

Being Database engineering for most of my professional experience I have realized that its NOT wise to define your backup strategy just based upon the size of the database. While it's good idea combine both online and logical(pg_dump/pg_dumpall) for certain situation, however it should be thought through what type of data it is and how much you are ready to lose in case of catastrophic situations.

Weekly online backup and daily incremental backups for mission-critical and production databases.

Logical backup for non-critical or development databases- Frequency - Weekly or bi-weekly.

Alternate Backup methods

One of the most common and most used methods is to take online backup is pg_basebackup or performing the file system level backup(copy data directory) after putting DB on backup mode. However, a disk-level snapshot is another way if you are using the storage manager for your underlying disk. Volume snapshots are much faster and extremely useful if you have a very large database size (2+ Terabyte)

How to reduce RPO and RTO?

Now we all understand the use of RPO and RTO and how important it's for any business or operations. Below are some most common and best practices on the database level to reduce your RPO and RTO:

Synchronous replication with Automatic failover: If you can't afford to lose data(Mission-critical applications, Banking Industries, Financial institutions) having synchronous PostgreSQL replication certainly helps and it also ensures that you have committed copy of data(Depending upon the Sync mode you choose) on the standby node. In case of any catastrophe, you can do an automative/manual failover to standby and significantly reduce RTO and RPO. This method doesn’t ensure zero data loss until you design your application to wait for commit on the database before completing the transaction. This approach of PostgreSQL sync replication can also add an additional overhead of application performance.

Asynchronous replication with Automatic failover: Streaming replication is asynchronous by default, in which case there is a small delay between committing a transaction in the primary and the changes becoming visible in the standby. This delay is however much smaller than with file-based log shipping, and also depends upon the load/transactions and network bandwidth. With streaming replication, archive_timeout is not required to reduce the data loss window. We have also seen customers using standby with 24 hrs of delays to handle certain recovery situations, where the user has accidentally dropped a table and delayed standby helped to recover it.

recovery_min_apply_delay = '1h'Consideration of the Disaster Recovery site: PostgreSQL does allow you to create a Disaster Recovery site to another region by using WAL. This is particularly important to ensure that you have the most updated copy of your database in another region or data center in case of any disaster scenarios where the complete data center is gone or not accessible. You may or may not be able to take hit on data loss(Recovery Point Objective) however RTO will be reduced even the site is completely gone.

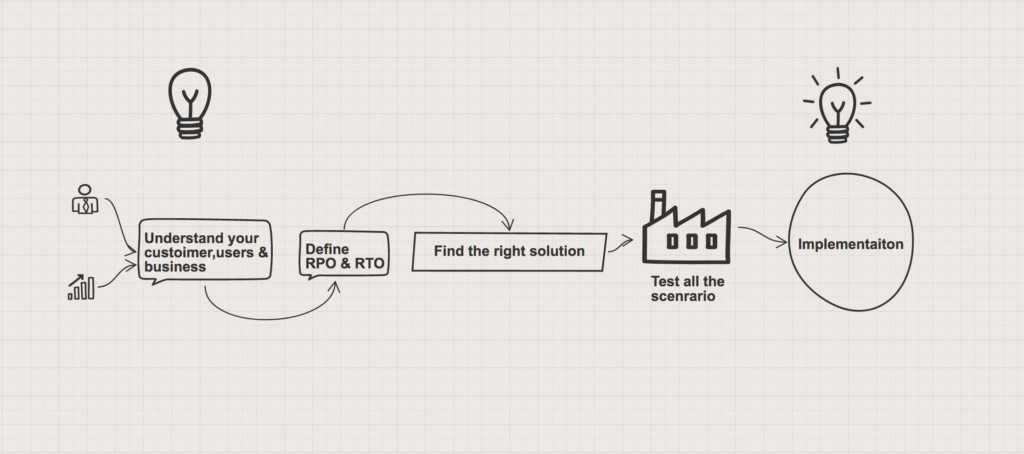

Conclusion:

The key to a successful backup strategy to understand end-users and businesses. It will help you understand how important your data is & how faster you want to make your system available in case of any catastrophic situations. This helps us to define the RTO and RPO. Finding the right solution whether it's backup, standby or any DR strategy and to make sure it's tested properly and finally implementation.